It’s tough to deploy OpenStack as it is, but the reasons it can fail don’t always lie with the technology itself, said Christian Carrasco, cloud advisor at Tapjoy, in his OpenStack Days Silicon Valley presentation last month.

At Tapjoy, a leading mobile app monetization company with over 2 million daily engagements, 270,000 active apps and more than 500 million users/devices, Carrasco has been able to deploy a cloud using OpenStack that takes advantage of public, private, and niche cloud infrastructure to keep things running smoothly.

The common reasons for failed OpenStack deployments go far beyond technology issues, Carrasco said.

Leave your dog(ma) at home

Engineers are no less susceptible than anyone else to counterproductive beliefs about cloud computing. These dogmatic views, earned from bad technology experiences, PTSD-inducing 3 A.M. support calls, and the rebranding of old and fake technology by unscrupulous vendors, act as blinders for teams looking to implement OpenStack and cloud computing projects.

OpenStack may not be for everyone, said Carrasco, but keeping an open mind to the paradigm shift will benefit many companies.

“We tried OpenStack back in 2011 and it was not ready for primetime,” he said. “You go forward to 2016, and it’s really rock-solid.”

Not Elmer FUDD (fear, uncertainty, doubt, doom)

When Linux came out, said Carrasco, there was a massive campaign against it based on the fear and uncertainty surrounding its newness. OpenStack faces a similar perception in the larger technology community today, fed by independently published articles, material promoted by commercial entities, and skewed statistical reports.

“OpenStack is not here to die,” said Carrasco. “It’s here to grow.”

You picked the wrong trunk

It’s easy to head to the OpenStack website and download any one of the base repositories available there. The problem, according to Carrasco, is that there’s no quick path to the correct set of modules and tweaks that a given business needs. It’s much like community Linux trunks, which have some configurations tweaked but are not always suitable for less experienced engineers.

“So we try OpenStack, we get the trunks, and all of a sudden we have a terrible experience,” he said. “We have to be careful how we try OpenStack.”

You are not a full-stack engineer

In fact, said Carrasco, no one really is. It’s incredibly difficult to be highly proficient at any one of the many specific technologies involved in implementing a solid cloud computing infrastructure, including hardware, networking, storage, data centers, databases, load balancers, routing, high availability, security, and virtualization. Engineers must also be proficient in their own software stack.

“We have to be careful to realize that we don’t have those skills the entire time,” said Carrasco.

OpenStack is not a better buggy

OpenStack is actually an entirely different paradigm. Teams looking to OpenStack as a replacement for their current technology will have trouble. OpenStack, said Carrasco, isn’t just a cheaper hypervisor, and not just an open source way of doing business. Cloud assets aren’t always IT assets (though they can also be just that). OpenStack might not even be ideal for more traditional businesses.

“OpenStack methodology is really shifting,” said Carrasco, “and the shift has not completed yet.”

Don’t go it alone

Managing data center technology can be tough on a small team.

“If it’s your first time around with this technology,” said Carrasco, “it’s going to be a challenge.”

Teams are going to need assistance with design and architecture services, self-service or automated tools, stack verification, and semi- or fully-managed services, as well as figuring out a full hardware and software stack. There are many options teams can use to get help.

Many teams aren’t at the right stage to implement OpenStack. They might be trying out a few different minimum viable products to see what works best; at that point, it makes no economic sense to go out and buy hardware, get data center contracts, and hire an entire cloud team. OpenStack is ideal for teams that are already online, moving online, or ready to stop using “buggies.”

How OpenStack succeeds

Carrasco’s presentation wasn’t all doom and gloom, however. OpenStack can and does succeed within a wide range of companies that use it for a diverse and powerful set of purposes.

Where’s your cloud officer?

“In all companies, we have the chief finance officer, chief product officer, chief security officer, and so on and so forth,” said Carrasco. “Where’s your cloud officer?”

Most big technology projects only succeed when there is real ownership at the organizational level. Orphaned clouds are risky for security and a headache for project managers, and are easily vaporized when they’re left to die, which represents a huge waste of investment. In addition, a solid leader for cloud projects in an organization will help prevent vendor lock-in.

Vendors need to get out of their own clouds

Right now, private and public cloud vendors are fiercely protecting their territory. Everyone is looking for creative ways to lock us into their own proprietary way of doing things. Interoperability is key to making sure that your assets remain with your company and are not locked into specific, expensive vendor solutions.

“There are very few companies coming up with creative ways to let us out,” said Carrasco. “You can check into your hotel, but you can never leave.”

Look at the bigger picture

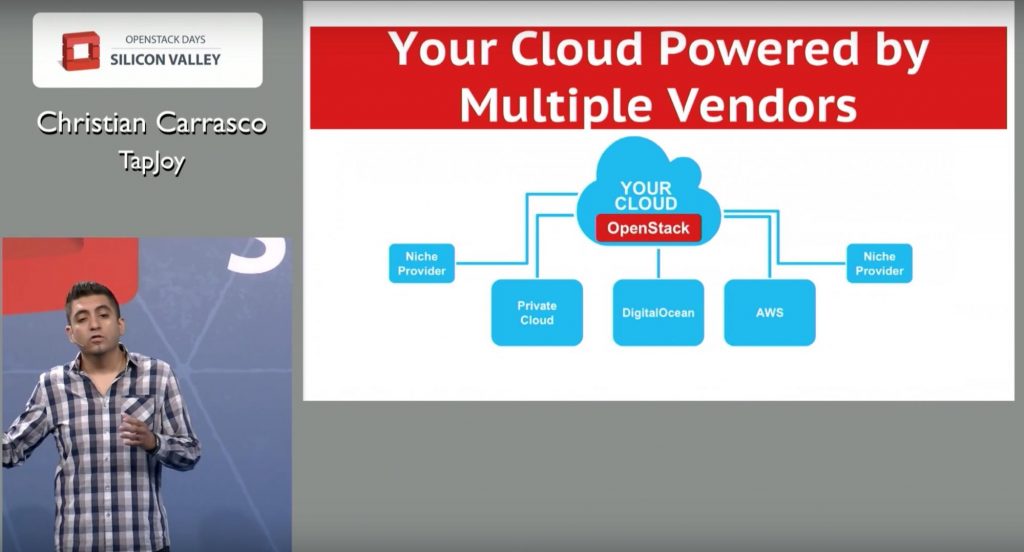

Many teams try to figure out cloud computing based on the specific technologies and clouds available. They’ll decide to go with a public cloud, a private cloud, a container solution, or even virtualization and end up having to make compromises based on that specific technology. It’s better to have options, said Carrasco, and those shouldn’t be limited to one platform or another.

Teams need to look at their own cloud, with OpenStack in the center to orchestrate, and then choose a variety of solutions, including private clouds, DigitalOcean services, Amazon Web Services (AWS), or any one of a number of niche providers to power it. This will allow companies to take back control of their cloud and run it wherever, and whenever, they want.

“It’s no longer about public or private cloud,” said Carrasco, “but about who we want to power our cloud assets.”

Carrasco calls this concept the Hyper-Converged Cloud. It allows companies to own a cloud that is deployed across multiple vendors, both private and public, and that can consume niche services. As a result, a bigger market will emerge.

Create interoperability standards

When PCs were first created, a key ingredient in their explosive growth and success was competitors getting together and building components for each other. A set of interoperability standards was created and a market grew. The same happened for the Internet, streaming media, mobile technologies, the automobile and airline industries, and healthcare, just to name a few.

In order to hyper-converge the cloud, the community must collaborate, coming together to define these standards. It’s not too late, said Carrasco, to create standards. The current cloud computing industry is still really small, especially when compared to the technology industry, or even industry in general.

Instead of trying to re-create what has come before, said Carrasco, the cloud industry should take advantage of its relative youth.

“Let’s stop trying to build a better buggy,” said Carrasco, “and really focus on making a better next-generation cloud.”

Cover Photo // CC BY NC
