Kata containers are containers that use hardware virtualization technologies for workload isolation almost without performance penalties. Top use cases are untrusted workloads and tenant isolation (for example in a shared Kubernetes cluster). This blog post describes how to run Percona Kubernetes Operator for Percona XtraDB Cluster (PXC Operator) using Kata containers.

Prepare Your Kubernetes Cluster

Setting up Kata containers and Kubernetes is well documented in the official GitHub repo (CRI-O, containerd, Kubernetes DaemonSet). We will cover only the most important steps and pitfalls.

Virtualization Support

First of all, remember that Kata containers require hardware virtualization support from the CPU on the nodes. To check whether your Linux system supports it, run the following on the node:

$ egrep '(vmx|svm)' /proc/cpuinfo

VMX (Virtual Machine Extensions) and SVM (Secure Virtual Machine) are Intel and AMD features, respectively, that add instructions allowing a guest OS to run with full privileges while keeping the host OS protected.

For example, on AWS only i3.metal and r5.metal instances provide VMX capability.
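
The check above can be wrapped in a small script so it is easy to repeat on each node. This is only a sketch and not part of the Kata tooling: the function name has_virt_flags and the printed messages are made up for this example, and a match only shows that the CPU advertises the vmx or svm flag.

```shell
#!/bin/sh
# Hypothetical helper: succeeds if a cpuinfo-style file lists vmx or svm.
# Defaults to /proc/cpuinfo when no file is given.
has_virt_flags() {
    cpuinfo_file="${1:-/proc/cpuinfo}"
    # -E: extended regex, -q: no output, -w: match whole words only
    grep -Eqw 'vmx|svm' "$cpuinfo_file" 2>/dev/null
}

if has_virt_flags; then
    echo "vmx/svm flag found: hardware virtualization is advertised"
else
    echo "no vmx/svm flag found: Kata containers will likely not run here"
fi
```

Passing a file name as the first argument makes it possible to check a saved copy of /proc/cpuinfo from another machine.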

Containerd

Kata containers are OCI (Open Container Initiative) compliant, which means that they work well with CRI (Container Runtime Interface) and are hence well supported by Kubernetes. To use Kata containers, make sure your Kubernetes nodes run the CRI-O or containerd runtime.

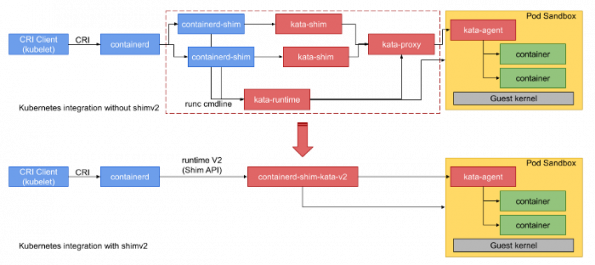

The image above shows how Kubernetes works with Kata.

Hint: GKE and kops allow you to start your cluster with containerd out of the box and skip the manual steps.

Setting Up Nodes

To run Kata containers, k8s nodes need to have kata-runtime installed and the runtime configured properly. The easiest way is to use a DaemonSet, which installs the required packages on every node and reconfigures containerd. As a first step, apply the following YAML files to create the DaemonSet:

$ kubectl apply -f https://raw.githubusercontent.com/kata-containers/packaging/master/kata-deploy/kata-rbac/base/kata-rbac.yaml

$ kubectl apply -f https://raw.githubusercontent.com/kata-containers/packaging/master/kata-deploy/kata-deploy/base/kata-deploy.yaml

The DaemonSet reconfigures containerd to support multiple runtimes. It does that by changing /etc/containerd/config.toml. Please note that some tools (e.g. kops) keep the containerd configuration in a separate file, config-kops.toml. In that case you need to copy the configuration created by the DaemonSet to the corresponding file and restart containerd.

Create RuntimeClasses for Kata. RuntimeClass is a feature that lets you pick the runtime for a container at creation time. It has been available as Beta since Kubernetes 1.14.
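
For orientation, a RuntimeClass object is tiny: it maps a name that pods can reference to a handler configured in the container runtime. The sketch below writes such a manifest to a file. It is an illustration under assumptions, not a copy of the upstream file: the output path is arbitrary, and the handler value kata-qemu is assumed to match the runtime name that the DaemonSet registers in containerd. The node.k8s.io/v1beta1 API version fits the Kubernetes 1.14 era; newer clusters use node.k8s.io/v1.

```shell
#!/bin/sh
# Sketch: write a RuntimeClass manifest for a kata-qemu handler.
# The output path is a hypothetical choice for this example.
manifest="${TMPDIR:-/tmp}/kata-qemu-runtimeclass.yaml"

cat > "$manifest" <<'EOF'
apiVersion: node.k8s.io/v1beta1
kind: RuntimeClass
metadata:
  name: kata-qemu      # the value pods put in runtimeClassName
handler: kata-qemu     # assumed to match the runtime name in containerd
EOF

echo "wrote $manifest; it can be applied with: kubectl apply -f $manifest"
```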

$ kubectl apply -f https://raw.githubusercontent.com/kata-containers/packaging/master/kata-deploy/k8s-1.14/kata-qemu-runtimeClass.yaml

Everything is set. Deploy a test nginx pod and set the runtime:

$ cat nginx-kata.yaml
apiVersion: v1
kind: Pod
metadata:
  name: nginx-kata
spec:
  runtimeClassName: kata-qemu
  containers:
  - name: nginx
    image: nginx

$ kubectl apply -f nginx-kata.yaml

$ kubectl describe pod nginx-kata | grep "Container ID"

Container ID: containerd://3ba8d62be5ee8cd57a35081359a0c08059cf08d8a53bedef3384d18699d13111

On the node, verify that Kata is used for this container with the ctr tool:

# ctr --namespace k8s.io containers list | grep 3ba8d62be5ee8cd57a35081359a0c08059cf08d8a53bedef3384d18699d13111

3ba8d62be5ee8cd57a35081359a0c08059cf08d8a53bedef3384d18699d13111 sha256:f35646e83998b844c3f067e5a2cff84cdf0967627031aeda3042d78996b68d35 io.containerd.kata-qemu.v2

The runtime is shown as kata-qemu.v2, as requested.
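
Kubernetes reports the container ID with a runtime prefix (containerd://), while ctr lists the bare ID, so the prefix has to be dropped before grepping. A minimal sketch of that step follows; the function name bare_container_id is invented for this example.

```shell
#!/bin/sh
# Hypothetical helper: strip the "<runtime>://" prefix from a Kubernetes
# container ID, leaving the bare ID that ctr prints.
bare_container_id() {
    # ${1#*://} removes the shortest leading match of "*://";
    # an ID without a prefix is returned unchanged.
    printf '%s\n' "${1#*://}"
}

bare_container_id "containerd://3ba8d62be5ee8cd57a35081359a0c08059cf08d8a53bedef3384d18699d13111"

# On a machine with cluster access, the ID can be fetched directly, for example:
#   bare_container_id "$(kubectl get pod nginx-kata \
#       -o jsonpath='{.status.containerStatuses[0].containerID}')"
```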

The current latest stable PXC Operator version (1.6) does not support runtimeClassName. It is still possible to run Kata containers by specifying the io.kubernetes.cri.untrusted-workload annotation. To ensure containerd supports this annotation, add the following to the configuration TOML file on the node:

# cat <<EOF >> /etc/containerd/config.toml
[plugins.cri.containerd.untrusted_workload_runtime]
  runtime_type = "io.containerd.kata-qemu.v2"
EOF

# systemctl restart containerd

Install the Operator

We will install the operator with the regular runtime but will put the PXC cluster into Kata containers.

Create the namespace and switch the context:

$ kubectl create namespace pxc-operator

$ kubectl config set-context $(kubectl config current-context) --namespace=pxc-operator

Get the operator from GitHub:

$ git clone -b v1.6.0 https://github.com/percona/percona-xtradb-cluster-operator

Deploy the operator into your Kubernetes cluster:

$ cd percona-xtradb-cluster-operator

$ kubectl apply -f deploy/bundle.yaml

Now let's deploy the cluster. Before that, we need to explicitly add an annotation to the PXC pods that marks them as untrusted, which forces Kubernetes to use the Kata containers runtime. Edit deploy/cr.yaml:

pxc:
  size: 3
  image: percona/percona-xtradb-cluster:8.0.20-11.1
  …
  annotations:
    io.kubernetes.cri.untrusted-workload: "true"

Now, let's deploy the PXC cluster:

$ kubectl apply -f deploy/cr.yaml

The cluster is up and running (using one node for the sake of the experiment):

$ kubectl get pods
NAME                                               READY   STATUS    RESTARTS   AGE
pxc-kata-haproxy-0                                 2/2     Running   0          5m32s
pxc-kata-pxc-0                                     1/1     Running   0          8m16s
percona-xtradb-cluster-operator-749b86b678-zcnsp   1/1     Running   0          44m

In the ctr output, you should see the Percona XtraDB Cluster containers running with the Kata runtime:

# ctr --namespace k8s.io containers list | grep percona-xtradb-cluster | grep kata

448a985c82ae45effd678515f6cf8e11a6dfca159c9abf05a906c7090d297cba docker.io/percona/percona-xtradb-cluster:8.0.20-11.2 io.containerd.kata-qemu.v2

We are working on adding support for the runtimeClassName option to our operators. Support for this feature will let users freely choose any container runtime.
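
Until then, the ctr check used earlier can be turned into a small filter that lists only the containers running under a Kata runtime. This is a sketch that assumes the three-column output (container ID, image, runtime) seen in the examples in this post; the function name list_kata_containers is made up.

```shell
#!/bin/sh
# Hypothetical filter: reads `ctr containers list` output on stdin and prints
# "IMAGE RUNTIME" for every row whose third column (runtime) mentions kata.
list_kata_containers() {
    awk '$3 ~ /kata/ { print $2, $3 }'
}

# Usage on a node, as root:
#   ctr --namespace k8s.io containers list | list_kata_containers
```

Matching on the runtime column instead of grepping the whole line avoids false positives from images that merely have "kata" in their name.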

Conclusions

Running databases in containers is an ongoing trend, and keeping data safe is always the top priority for a business. Kata containers provide security isolation through mature and extensively tested QEMU virtualization with little to no changes to the existing environment.

Deploy Percona XtraDB Cluster with ease in your Kubernetes cluster with our Operator and Kata containers for better isolation with almost no performance penalty.

This article was originally posted on percona.com/blog.