Open Virtual Network (OVN) is a relatively new networking technology that provides a powerful and flexible software implementation of standard networking functionalities such as switches, routers, firewalls, etc.

Importantly, OVN is distributed in the sense that the aforementioned network entities can be realized over a distributed set of compute/networking resources. OVN is tightly coupled with OVS, essentially being a layer of abstraction which sits above a set of OVS switches and realizes the above networking components across these switches in a distributed manner.

A number of cloud computing platforms and more general compute resource management frameworks are working on OVN support, including oVirt, OpenStack, Kubernetes and OpenShift; progress on this front is quite advanced. Importantly, one dimension of the OVN vision is that it can act as a common networking substrate to facilitate integration of more than one of these systems, although realizing that vision remains future work.

In the context of our work on developing an edge computing testbed, we set up a modest OpenStack cluster to emulate functionality deployed within an enterprise data center with OVN providing network capabilities to the cluster. This blog post provides a brief overview of the system architecture and notes some issues we had getting it up and running.

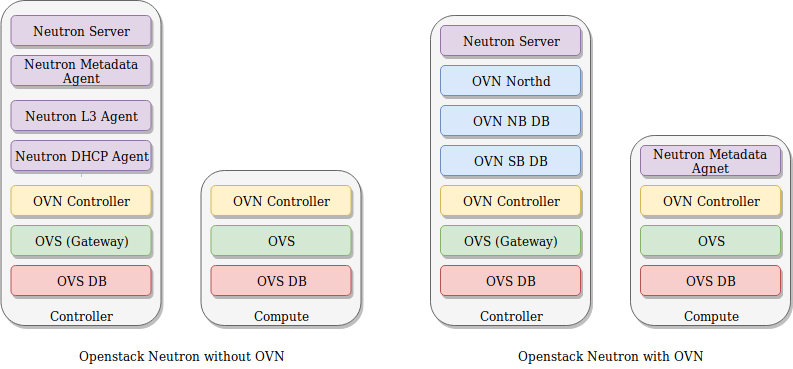

As our system is not a production system, high availability (HA) support was not a requirement; consequently, it was not necessary to consider the HA OVN mode. It was therefore natural to host the OVN control services, including the Northbound and Southbound DBs and the Northbound daemon (ovn-northd), on the OpenStack controller node. Because this is the node through which external traffic goes, we also needed to run an external-facing OVS on this node, which required its own OVN controller and local OVS database. Further, as this OVS chassis is intended for external traffic, it needed to be configured with ‘enable-chassis-as-gw’.

We configured our system to use DHCP provided by OVN; consequently, the Neutron DHCP agent was no longer necessary and we removed this process from our controller node. Similarly, L3 routing was done within OVN, meaning that the Neutron L3 agent was no longer necessary. OpenStack metadata support is implemented differently when OVN is used: instead of having a single metadata process running on a controller serving all metadata requests, the metadata service is deployed on each node, and the OVS switch on each node routes requests for 169.254.169.254 to the local metadata agent; this agent then queries the nova metadata service to obtain the metadata for the specific VM.

The services deployed on the controller and compute nodes are shown in Figure 1 below.

Figure 1: Neutron containers with and without OVN

We used Kolla to deploy the system. Kolla does not currently have full support for OVN; however, specific Kolla containers for OVN have been created (e.g. kolla/ubuntu-binary-ovn-controller:queens, kolla/ubuntu-binary-neutron-server-ovn:queens). Hence, we used an approach which augments the standard Kolla-ansible deployment with manual configuration of the extra containers necessary to get the system running on OVN.

As always, many smaller issues were encountered while getting the system working – we won’t detail all these issues here, but rather focus on the more substantive issues. We divide these into three specific categories: OVN parameters which need to be configured, configuration specifics for the Kolla OVN containers and finally a point which arose due to assumptions made within Kolla that do not necessarily hold for OVN.

To enable OVN, it was necessary to modify the configuration of the OVS switches operating on all the nodes. The existing OVS containers and OVSDB could be used for this (the OVS version shipped with Kolla/Queens is v2.9.0), but some settings had to be changed. First, it was necessary to configure a system-id for each OVS chassis. We chose to select fixed UUIDs a priori and use these for each deployment, which gave us a more systematic process for setting up the system, but it is also possible to use a randomly generated UUID.

docker exec -ti openvswitch_vswitchd ovs-vsctl set open_vswitch . external-ids:system-id="$SYSTEM_ID"
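Either choice of system-id can be handled by one small wrapper. The following is a minimal sketch, not part of the original deployment: `gen_system_id` is a hypothetical helper, and the sketch only prints the `ovs-vsctl` command so it can be inspected before being run on a node.

```shell
#!/bin/sh
# Hypothetical helper: print a random lowercase UUID for use as a system-id.
gen_system_id() {
    if [ -r /proc/sys/kernel/random/uuid ]; then
        cat /proc/sys/kernel/random/uuid      # Linux kernel UUID source
    else
        uuidgen | tr 'A-F' 'a-f'              # fallback where uuidgen exists
    fi
}

# Keep a fixed SYSTEM_ID if one was chosen a priori; otherwise generate one.
SYSTEM_ID="${SYSTEM_ID:-$(gen_system_id)}"

# Print the command instead of executing it (assumption: review first).
echo "docker exec openvswitch_vswitchd ovs-vsctl set open_vswitch . external-ids:system-id=\"$SYSTEM_ID\""
```

Exporting `SYSTEM_ID` before running the script reproduces the fixed-UUID approach; leaving it unset gives the random variant.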

On the controller node, it was also necessary to set the following parameters:

docker exec -ti openvswitch_vswitchd ovs-vsctl set Open_vSwitch . \
    external_ids:ovn-remote="tcp:$HOST_IP:6642" \
    external_ids:ovn-nb="tcp:$HOST_IP:6641" \
    external_ids:ovn-encap-ip=$HOST_IP \
    external_ids:ovn-encap-type="geneve" \
    external_ids:ovn-cms-options="enable-chassis-as-gw"

docker exec openvswitch_vswitchd ovs-vsctl set open . external-ids:ovn-bridge-mappings=physnet1:br-ex

On the compute nodes, the following was necessary:

docker exec -ti openvswitch_vswitchd ovs-vsctl set Open_vSwitch . \
    external_ids:ovn-remote="tcp:$OVN_SB_HOST_IP:6642" \
    external_ids:ovn-nb="tcp:$OVN_NB_HOST_IP:6641" \
    external_ids:ovn-encap-ip=$HOST_IP \
    external_ids:ovn-encap-type="geneve"
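After setting these values, it can be worth checking that nothing was missed on a node. The sketch below is an illustration only: `missing_ovn_keys` is a hypothetical helper, and it assumes that `ovs-vsctl get Open_vSwitch . external_ids` prints the map in the usual `{key="value", key=value}` form.

```shell
#!/bin/sh
# Hypothetical helper: read an external_ids map on stdin and print each
# OVN-related key used in this post that is absent from it.
missing_ovn_keys() {
    ids=$(cat)
    for key in system-id ovn-remote ovn-encap-ip ovn-encap-type; do
        case "$ids" in
            # A key is either first in the map ("{key=") or follows ", ".
            *"{$key="*|*" $key="*) ;;
            *) echo "$key" ;;
        esac
    done
}

# Intended use on a node (not executed here):
#   docker exec openvswitch_vswitchd ovs-vsctl get Open_vSwitch . external_ids \
#       | missing_ovn_keys
```

An empty result means all four keys are present; any key printed still needs to be set on that chassis.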

Having changed the OVS configuration on all the nodes, it was then necessary to get the services operational on the nodes. There are two specific aspects to this: modifying the service configuration files as necessary and starting the new services in the correct way.

Not many changes to the service configurations were required. The primary changes related to ensuring that the OVN mechanism driver was used and letting neutron know how to communicate with OVN. We also used the geneve tunnelling protocol in our deployment, and this required the following configuration settings.

For the neutron server OVN container:

ml2_conf.ini

[ml2]
mechanism_drivers = ovn
type_drivers = local,flat,vlan,geneve
tenant_network_types = geneve
[ml2_type_geneve]
vni_ranges = 1:65536
max_header_size = 38
[ovn]
ovn_nb_connection = tcp:172.30.0.101:6641
ovn_sb_connection = tcp:172.30.0.101:6642
ovn_l3_scheduler = leastloaded
ovn_metadata_enabled = true

neutron.conf

[DEFAULT]
core_plugin = neutron.plugins.ml2.plugin.Ml2Plugin
service_plugins = networking_ovn.l3.l3_ovn.OVNL3RouterPlugin

For the metadata agent container (running on the compute nodes), it was necessary to point it at the nova metadata service with the appropriate shared key, and to tell it how to communicate with the OVS instance running on each of the compute nodes:

nova_metadata_host = 172.30.0.101
metadata_proxy_shared_secret = <SECRET>
bridge_mappings = physnet1:br-ex
datapath_type = system
ovsdb_connection = tcp:127.0.0.1:6640
local_ip = 172.30.0.101

For the OVN-specific containers (ovn-northd and the ovn-sb and ovn-nb databases), it was necessary to ensure that they had the correct configuration at startup; specifically, that they knew how to communicate with the relevant databases. Hence, start commands such as

/usr/sbin/ovsdb-server /var/lib/openvswitch/ovnnb.db \
    -vconsole:emer -vsyslog:err -vfile:info \
    --remote=punix:/run/openvswitch/ovnnb_db.sock \
    --remote=ptcp:$ovnnb_port:$ovsdb_ip \
    --unixctl=/run/openvswitch/ovnnb_db.ctl \
    --log-file=/var/log/kolla/openvswitch/ovsdb-server-nb.log

were necessary (for the ovn northbound database) and we had to modify the container start process accordingly.

It was also necessary to update the neutron database to support OVN-specific versioning information. This was straightforward using the following command:

docker exec -ti neutron-server-ovn_neutron_server_ovn_1 neutron-db-manage upgrade heads

The last issue we had to overcome was that Kolla and neutron OVN had slightly different views on the naming of the patch ports attached to the external bridge. Kolla-ansible configured a connection between the br-ex and br-int OVS bridges on the controller node with port names phy-br-ex and int-br-ex respectively. OVN also created ports with the same purpose but with different names: patch-provnet-<UUID>-to-br-int and patch-br-int-to-provnet-<UUID>. As these ports served the same purpose, our somewhat hacky solution was to manually remove the ports originally created by Kolla-ansible.
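The manual removal can be sketched as follows. This is an illustration under assumptions rather than the exact commands we ran: it uses the container name from the earlier commands, relies on `ovs-vsctl --if-exists del-port`, and by default only prints the commands (the `OVS_VSCTL` variable and the function name are hypothetical).

```shell
#!/bin/sh
# Command prefix used to reach ovs-vsctl. The default starts with "echo",
# so the commands are printed rather than executed (dry run).
OVS_VSCTL="${OVS_VSCTL:-echo docker exec openvswitch_vswitchd ovs-vsctl}"

# Remove the two patch ports that Kolla-ansible created between br-ex and
# br-int, leaving the equivalent ports created by OVN in place.
remove_kolla_patch_ports() {
    $OVS_VSCTL --if-exists del-port br-ex phy-br-ex
    $OVS_VSCTL --if-exists del-port br-int int-br-ex
}

remove_kolla_patch_ports
```

Setting `OVS_VSCTL="docker exec openvswitch_vswitchd ovs-vsctl"` before running the script would apply the change for real on the controller node.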

Having overcome all these steps, it was possible to launch a VM which had external network connectivity and to which a floating IP address could be assigned.

Clearly, this approach is not realistic for supporting a production environment, but it’s an appropriate level of hackery for a testbed.

Other noteworthy issues which arose during this work include the following:

- The standard Docker AppArmor configuration in Ubuntu is such that mount cannot be run inside containers, even if they have the appropriate privileges. This has to be disabled, or else it is necessary to ensure that the containers do not use the default Docker AppArmor profile.

- A specific issue with mounts inside a container resulted in the mount table filling up with 65,536 mounts and rendering the host quite unusable (thanks to Stefan for providing a bit more detail on this); the workaround was to ensure that /run/netns was bind mounted into the container.

- As we used geneve encapsulation, the geneve kernel modules had to be loaded.

- Full datapath NAT support is only available for Linux kernel 4.6 and up. We had to upgrade the 4.4 kernel which came with our standard Ubuntu 16.04 environment.
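The last two points lend themselves to a quick preflight check on each node. The sketch below is an assumption-laden illustration: `kernel_at_least` is a hypothetical helper that compares only the major and minor parts of `uname -r`, and the module commands are left as comments because they need root.

```shell
#!/bin/sh
# Succeeds when kernel release $1 (e.g. "4.15.0-45-generic") is at least
# the major.minor version given in $2 (e.g. "4.6").
kernel_at_least() {
    have=${1%%-*}             # drop any "-45-generic" style suffix
    have_major=${have%%.*}
    rest=${have#*.}
    have_minor=${rest%%.*}
    want_major=${2%%.*}
    want_minor=${2#*.}
    [ "$have_major" -gt "$want_major" ] ||
        { [ "$have_major" -eq "$want_major" ] && [ "$have_minor" -ge "$want_minor" ]; }
}

if kernel_at_least "$(uname -r)" 4.6; then
    echo "kernel is 4.6 or newer"
else
    echo "kernel is older than 4.6: upgrade before relying on datapath NAT"
fi

# Loading and checking the geneve module (run as root on the node):
#   modprobe geneve
#   lsmod | grep geneve
```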

This is certainly not a complete guide to how to get OpenStack up and running with OVN, but may be useful to some folks who are toying with this. In future, we’re going to experiment with extending OVN to an edge networking context and will provide more details as this work evolves.

This post first appeared on the blog for the ICCLab (Cloud Computing Lab) and the SPLab (Service Prototyping Lab) of the ZHAW Zurich University of Applied Sciences department.
