What Is Istio?

Istio is an open-source service mesh that provides a uniform way to connect, manage, and secure microservices. It acts as a layer between the application and the network, providing traffic management, security, and observability.

Istio makes it easier to manage traffic flows, enforce access policies, and collect telemetry data without modifying application code. It supports multiple platforms and works with various container orchestration systems such as Kubernetes, Mesos and Nomad.

Istio provides features such as circuit breaking, load balancing, mutual TLS authentication, and distributed tracing. It also allows for canary deployments and A/B testing, making it easier to roll out new features and updates.
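As a sketch of the canary and A/B routing mentioned above, the shell function below prints a DestinationRule and a VirtualService that send a configurable percentage of traffic to a second version of a service. The function name, the example service name `reviews`, and the assumption that the service's pods carry `version: v1` and `version: v2` labels are all hypothetical; adapt them to your own workloads.

```shell
#!/bin/sh
# Hypothetical helper: print Istio manifests that split traffic between
# two versions ("v1" and "v2") of one service. Assumes the service's pods
# are labeled version=v1 and version=v2.
canary_manifest() {
  svc="$1"                          # Kubernetes Service name (placeholder)
  canary_pct="$2"                   # percent of requests routed to v2
  stable_pct=$((100 - canary_pct))  # remainder stays on v1

  cat <<EOF
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: ${svc}
spec:
  host: ${svc}
  subsets:
  - name: v1
    labels:
      version: v1
  - name: v2
    labels:
      version: v2
---
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: ${svc}
spec:
  hosts:
  - ${svc}
  http:
  - route:
    - destination:
        host: ${svc}
        subset: v1
      weight: ${stable_pct}
    - destination:
        host: ${svc}
        subset: v2
      weight: ${canary_pct}
EOF
}
```

Running `canary_manifest reviews 10 | kubectl apply -f -` would send roughly 10% of requests to v2. Re-applying with a larger percentage shifts traffic gradually without redeploying either version.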

How Istio Works

Istio works by adding a service mesh layer between the application and the network. It provides a data plane and control plane to handle traffic management, security, and observability for microservices.

The data plane consists of a set of intelligent proxies (Envoy) deployed as a sidecar alongside each microservice instance. These proxies intercept all inbound and outbound traffic to the microservice and provide features such as load balancing, circuit breaking, and service discovery. They also encrypt communication between services, enforce access control policies, and collect telemetry data for monitoring and tracing purposes.
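The circuit breaking and load balancing that the proxies perform are configured through a DestinationRule. The sketch below, again a hypothetical shell helper, prints one with connection pool limits and outlier detection, which ejects an endpoint from the load-balancing pool after repeated 5xx errors. The threshold values are arbitrary examples, not recommendations.

```shell
#!/bin/sh
# Hypothetical helper: print a DestinationRule that applies round-robin
# load balancing, connection limits, and outlier detection (circuit
# breaking) to requests the sidecars send to the given host.
circuit_breaker_manifest() {
  svc="$1"   # Kubernetes Service name (placeholder)

  cat <<EOF
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: ${svc}-circuit-breaker
spec:
  host: ${svc}
  trafficPolicy:
    loadBalancer:
      simple: ROUND_ROBIN
    connectionPool:
      tcp:
        maxConnections: 100          # max connections to the destination host
      http:
        http1MaxPendingRequests: 10  # requests queued beyond this are rejected
        maxRequestsPerConnection: 10
    outlierDetection:
      consecutive5xxErrors: 5        # eject an endpoint after 5 consecutive 5xx
      interval: 10s                  # how often endpoints are evaluated
      baseEjectionTime: 30s          # minimum ejection duration
      maxEjectionPercent: 50         # never eject more than half the endpoints
EOF
}
```

To avoid conflicting rules, you would typically merge this `trafficPolicy` into any existing DestinationRule for the same host rather than applying a second rule alongside it.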

The control plane manages and configures the data plane proxies. Since Istio 1.5, a single component, Istiod, combines the functions of several components that earlier releases ran separately (along with the sidecar injector):

- Pilot: The service discovery and traffic management component that configures the Envoy proxies with routing rules and load-balancing policies. Pilot also distributes the configuration for features such as fault injection and canary releases, which let you test new services or changes before they are rolled out to production.

- Citadel: The security component that manages certificate issuance and rotation for service-to-service communication. The certificates it issues give each service a verifiable identity, which the proxies use for mutual TLS authentication so that services can confirm whom they are communicating with.

- Galley: The configuration validation and management component that validates the configuration submitted to the control plane and distributes it to the other control-plane components.

Earlier releases also included Mixer, a policy enforcement and telemetry collection component. Mixer was not merged into Istiod: it was deprecated in Istio 1.5 and removed in Istio 1.8, and its telemetry and policy functions now run directly in the Envoy proxies.
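To show how the certificates issued by the control plane are put to use, the sketch below prints a PeerAuthentication policy that requires mutual TLS. Placing it in the mesh's root namespace (istio-system in a default installation) applies it mesh-wide; passing another namespace limits it to that namespace. The helper function itself is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print a PeerAuthentication policy that rejects
# plain-text traffic to sidecar-injected workloads. Defaults to the
# istio-system root namespace, which makes the policy mesh-wide.
strict_mtls_manifest() {
  ns="${1:-istio-system}"

  cat <<EOF
apiVersion: security.istio.io/v1beta1
kind: PeerAuthentication
metadata:
  name: default
  namespace: ${ns}
spec:
  mtls:
    mode: STRICT
EOF
}
```

Before switching to STRICT, make sure every client of the affected workloads has a sidecar; clients without one can no longer connect.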

What Are the Benefits of Deploying Istio on OpenStack?

Deploying Istio on OpenStack can provide several benefits for organizations that are running microservices-based applications. Here are some of the key benefits:

- Simplified management: OpenStack provides a unified platform for managing infrastructure resources, including compute, storage, and networking. When Istio runs on OpenStack, OpenStack automates the provisioning and scaling of the underlying infrastructure, while Istio manages how the microservices running on top of it communicate.

- Improved security: OpenStack provides a range of security features, including network segmentation, encryption, and role-based access control. By deploying Istio on OpenStack, organizations can further improve the security of their microservices-based applications, by using Istio’s security features, such as mutual TLS authentication and encryption of service communication.

- Enhanced visibility: OpenStack provides a range of monitoring and logging capabilities, which can help organizations to gain insights into the behavior of their infrastructure and applications. By deploying Istio on OpenStack, organizations can further enhance their visibility, by using Istio’s observability features, such as distributed tracing, metrics collection, and logging. Better visibility can also promote agile practices like continuous documentation.

- Increased performance: OpenStack provides a range of performance optimization features, including network virtualization, load balancing, and auto-scaling. Istio complements these at the service level with traffic management features such as request-level load balancing and intelligent routing, and canary releases let you validate a new version's performance on a small share of traffic before rolling it out fully.

How to Deploy Istio on OpenStack?

Here are the general steps for deploying Istio on OpenStack:

1. Prepare your OpenStack environment: Ensure that your OpenStack environment is properly set up, with the necessary compute, storage, and networking resources to support your microservices-based applications.
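Before building on the OpenStack environment, it can help to confirm from your workstation that the required command-line tools are installed and that your project has room for the cluster. The sketch below assumes the OpenStack CLI (`openstack`), `kubectl`, and `curl` are the tools you need; the helper names are hypothetical.

```shell
#!/bin/sh
# Hypothetical preflight helpers for an OpenStack project that will host
# a Kubernetes cluster with Istio.

# Print any command that is not on PATH and return non-zero if one is missing.
missing_tools() {
  missing=""
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || missing="$missing $tool"
  done
  if [ -n "$missing" ]; then
    echo "missing:$missing"
    return 1
  fi
  echo "all tools found"
}

# Show what the current OpenStack project can offer the cluster.
# Requires credentials to be loaded, e.g. by sourcing an openrc file.
show_openstack_capacity() {
  openstack quota show      # instance, core, and RAM limits for the project
  openstack flavor list     # available VM sizes for cluster nodes
  openstack network list    # networks the nodes can attach to
}
```

A typical run would be `missing_tools openstack kubectl curl && show_openstack_capacity`.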

2. Install Kubernetes: Install Kubernetes on top of OpenStack, using a Kubernetes distribution or installer such as OpenShift or Kubespray. This will provide a container orchestration platform to manage your microservices.
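Once the cluster is up, all nodes should report Ready before you install Istio. The hypothetical helper below reads the output of `kubectl get nodes --no-headers` and fails if the list is empty or if any node's STATUS column is not exactly `Ready`. Note that cordoned nodes, which report `Ready,SchedulingDisabled`, are treated as not ready.

```shell
#!/bin/sh
# Hypothetical helper: read `kubectl get nodes --no-headers` output on
# stdin and succeed only if at least one node is listed and every node's
# STATUS (second column) is exactly "Ready".
all_nodes_ready() {
  awk '
    $2 != "Ready" { not_ready++; print "not ready: " $1 }
    END { if (NR == 0 || not_ready > 0) exit 1 }
  '
}
```

For example, `kubectl get nodes --no-headers | all_nodes_ready && echo "cluster ready for Istio"` prints the names of any nodes that are not ready, or the confirmation message if all of them are.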

3. Install Istio: Install Istio on top of Kubernetes, using istioctl or the Helm charts. istioctl provides the most streamlined installation; the in-cluster Istio operator is discouraged for new installations in favor of istioctl and Helm. Start by downloading an Istio release, which includes the istioctl binary.

On Linux, download the latest release using the following command:

curl -L https://istio.io/downloadIstio | sh -

The script extracts the release into a folder named after its version. If the current version is 1.17.1, the folder is istio-1.17.1. Change into that folder using the following command:

cd istio-1.17.1

The folder contains a bin subfolder with the istioctl binary. From the istio-1.17.1 folder (without changing into bin), add bin to your PATH so that istioctl can be run from any directory in the current shell session:

export PATH=$PWD/bin:$PATH

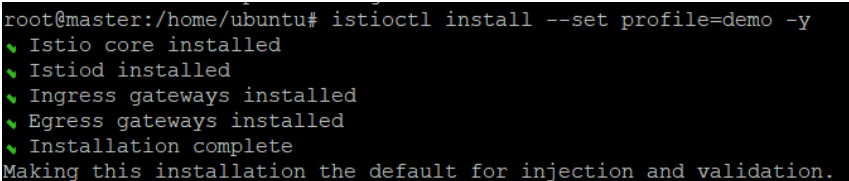
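Because the download script fetches whatever release is current, the folder name can differ from istio-1.17.1. A small sketch that pins the version (the download script reads the `ISTIO_VERSION` environment variable) keeps the folder name and PATH predictable; the function names are hypothetical.

```shell
#!/bin/sh
# Hypothetical helpers that pin the Istio release used throughout this guide.
ISTIO_VERSION="1.17.1"

# Download and extract the pinned release into ./istio-$ISTIO_VERSION.
download_istio() {
  curl -L https://istio.io/downloadIstio | ISTIO_VERSION="$ISTIO_VERSION" sh -
}

# Put the pinned release's istioctl first on PATH for this shell session.
# Run from the folder that contains istio-$ISTIO_VERSION.
add_istioctl_to_path() {
  PATH="$PWD/istio-$ISTIO_VERSION/bin:$PATH"
  export PATH
}
```

Run `download_istio` once, then `add_istioctl_to_path` in each new shell (or add the equivalent export line to your shell profile). `istioctl version` should then report 1.17.1 as the client version.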

4. Configure Istio: Once istioctl is available, install the Istio control plane into the cluster and configure it to work with your microservices-based applications. This includes choosing a configuration profile, setting up ingress and egress gateways, and defining traffic management policies. The following command installs Istio with the demo profile, which enables both the ingress and egress gateways and is intended for evaluation rather than production:

istioctl install --set profile=demo -y

istioctl reports progress for each component it installs and finishes by confirming that the installation is complete.
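The demo profile is meant for evaluation. For a longer-lived installation, istioctl also accepts an IstioOperator file through its `-f` flag. The sketch below, a hypothetical helper, starts from the default profile (which installs only an ingress gateway), adds an egress gateway, and turns on Envoy access logging; treat it as a starting point rather than a production-ready configuration.

```shell
#!/bin/sh
# Hypothetical helper: print an IstioOperator overlay for
# `istioctl install -f`. Starts from the default profile, enables the
# egress gateway that profile leaves off, and logs requests to stdout.
istio_install_config() {
  cat <<EOF
apiVersion: install.istio.io/v1alpha1
kind: IstioOperator
spec:
  profile: default
  meshConfig:
    accessLogFile: /dev/stdout
  components:
    egressGateways:
    - name: istio-egressgateway
      enabled: true
EOF
}
```

You would write it to a file with `istio_install_config > istio-install.yaml` and install it with `istioctl install -f istio-install.yaml -y`.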

5. Test and deploy microservices: Test your microservices with Istio to ensure that they are working as expected. Deploy your microservices to the Kubernetes cluster, and configure them to use Istio for traffic management and security. You can inject the Istio sidecar into your microservices manually with istioctl, or have it applied automatically to every new pod in a namespace.

When you set the istio-injection=enabled label on a namespace, and the injection webhook is enabled, any new pods that are created in that namespace will automatically have a sidecar added to them.

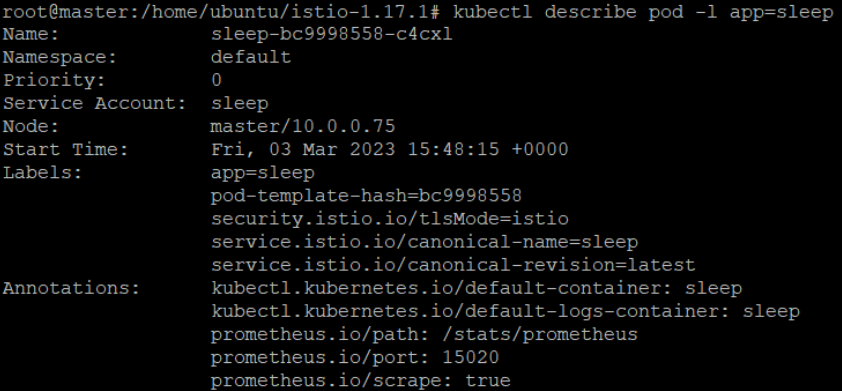
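The label can be set on an existing namespace with `kubectl label namespace <name> istio-injection=enabled`, or declared when the namespace is created. The hypothetical helper below prints such a Namespace manifest. Because injection only happens when a pod is created, pods that were already running keep running without a sidecar until they are recreated, for example with `kubectl rollout restart deployment -n <name>`.

```shell
#!/bin/sh
# Hypothetical helper: print a Namespace manifest carrying the label that
# Istio's injection webhook watches for.
injected_namespace_manifest() {
  ns="$1"   # namespace name (placeholder)

  cat <<EOF
apiVersion: v1
kind: Namespace
metadata:
  name: ${ns}
  labels:
    istio-injection: enabled
EOF
}
```

For manual injection instead, `istioctl kube-inject -f <manifest>.yaml | kubectl apply -f -` adds the sidecar to the manifest itself before it is applied.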

Note that, unlike manual injection, automatic injection occurs at the pod level. You won’t see any change to the deployment itself. Instead, you’ll want to check individual pods (via kubectl describe) to see the injected proxy. Learn more in the Istio documentation.

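For example, if you have deployed the sleep sample application (samples/sleep/sleep.yaml in the Istio release) into a labeled namespace, the following command shows whether the sidecar was injected into its pods: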

kubectl describe pod -l app=sleep

In the output, the pod should list an istio-proxy container alongside the application container, similar to this:

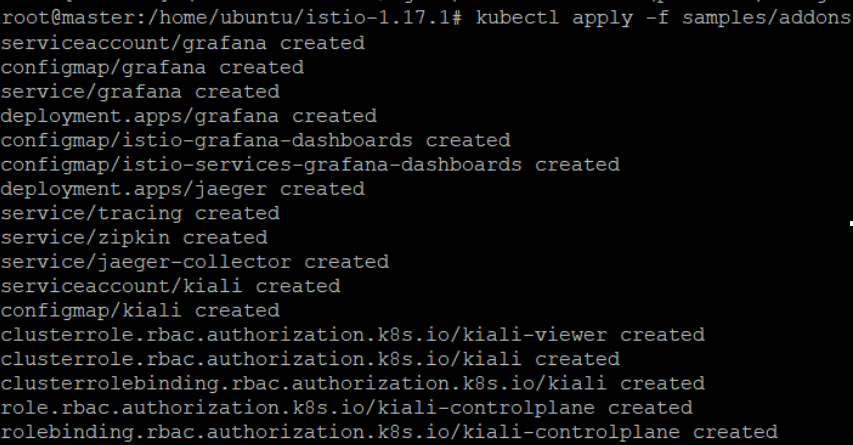
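Reading `kubectl describe` output works for a single pod; for scripting, a narrower check is to list the pod's container names and look for `istio-proxy`, the container Istio injects. The helper below is a hypothetical sketch of that check.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the space-separated list of container
# names passed as $1 includes istio-proxy, the injected sidecar container.
has_istio_sidecar() {
  for name in $1; do   # unquoted on purpose: split the list on whitespace
    if [ "$name" = "istio-proxy" ]; then
      return 0
    fi
  done
  return 1
}
```

Combined with kubectl, a check on the first sleep pod could look like `has_istio_sidecar "$(kubectl get pod -l app=sleep -o jsonpath='{.items[0].spec.containers[*].name}')" && echo "sidecar injected"`.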

6. Monitor and manage Istio: Use Istio's observability features to monitor the behavior of your microservices and identify any issues or performance bottlenecks. Use Istio's traffic management features when deploying new microservices or updating existing ones, while Kubernetes handles scaling. Kiali, a management console for Istio, provides a dashboard for monitoring and managing the mesh.

Kiali is not installed by default. Its manifest ships with the Istio release in the samples/addons folder, together with Prometheus (which Kiali uses as its metrics source), Grafana, and Jaeger. From the folder where you downloaded Istio, install the add-ons using the following command:

kubectl apply -f samples/addons

Then, you can run the dashboard using this command:

istioctl dashboard kiali
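The add-ons can take a minute to start, and the dashboard is only reachable once the Kiali pod is running. A hypothetical wrapper that waits for the rollout first might look like this; it assumes the default istio-system namespace and that it is run from the Istio release folder.

```shell
#!/bin/sh
# Hypothetical wrapper: install the sample add-ons, wait for Kiali to be
# ready, then open its dashboard through istioctl's port forwarding.
# Run from the Istio release folder (e.g. istio-1.17.1).
open_kiali() {
  kubectl apply -f samples/addons || return 1
  kubectl rollout status deployment/kiali -n istio-system --timeout=180s || return 1
  istioctl dashboard kiali
}
```

If istioctl runs on a remote OpenStack instance, the forwarded dashboard listens on that instance (port 20001 by default), so you would reach it from your workstation through an SSH tunnel such as `ssh -L 20001:localhost:20001 <user>@<instance-ip>`.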


Conclusion

Deploying Istio on OpenStack can provide a powerful solution for managing and securing microservices. Istio’s service mesh architecture can help simplify the management of complex microservice architectures, providing advanced features such as traffic management, security, and observability. OpenStack, as an open-source platform for building private and public clouds, provides a flexible and scalable infrastructure for running Istio.

However, deploying Istio on OpenStack can be a complex process that requires careful planning and execution. It is essential to ensure that the OpenStack environment is properly configured to support Istio’s requirements and to consider the impact of Istio on application performance and latency, as well as potential security risks.
